Better models for understanding AI using Fish, Coconuts and Terminators looking like Will Smith

I promise this will make sense in the end.

Week after week, the discourse and hand-wringing around AI somehow manages to reach a new all-time high. Case in point: this past week a Substack post briefly tanked the public markets.

A very quick rundown of what happened in case you somehow missed it:

Citrini Research (a short seller with positions in many of the firms they referenced) put out a hypothetical future memo in which they spelled out the ultimate doom and gloom death spiral of AI taking white collar jobs, the economy collapsing and the government unable to respond.

Most of the companies mentioned in the research saw their stocks sell off, and the wider market followed.

Basically every mainstream publication wrote some version of “omg a Substack post triggered a big selloff in the markets.”

Many people had strong reactions, and tons of rebuttals were written (Ben Thompson, Noah Smith, Citadel, etc.).

The memo itself is pretty interesting if you view it only as a thought experiment about how bad things can get and don’t interpret it as an oracle predicting the future, which most people seemed to do.

All of this really gets at three questions people can’t agree on:

Is AI actually getting as good as fast as people say?

Is AI going to cause mass unemployment of white collar workers and destabilize the world economy?[1]

Is this all going to end with humanity being wiped out?

Is AI actually getting as good as fast as people say?

For building a mental model of why people think advancements in AI are happening so quickly, I recommend this post, which begins with this parallel:

Think back to February 2020.

If you were paying close attention, you might have noticed a few people talking about a virus spreading overseas. But most of us weren’t paying close attention. The stock market was doing great, your kids were in school, you were going to restaurants and shaking hands and planning trips. If someone told you they were stockpiling toilet paper you would have thought they’d been spending too much time on a weird corner of the internet. Then, over the course of about three weeks, the entire world changed. Your office closed, your kids came home, and life rearranged itself into something you wouldn’t have believed if you’d described it to yourself a month earlier.

I think we’re in the “this seems overblown” phase of something much, much bigger than Covid.

Writing this today, I realize I come off as the equivalent of the guy telling you to hoard toilet paper in early 2020. You may think I’m crazy, and I’m ok with that.

The crux of the argument he goes on to make is that the coming phase change is evident mostly to engineers writing code. AI is really great at coding. The big AI companies have first focused on making AI really great at writing code because if you can write good code 100x faster, you can build the rest of the stuff you need to build 100x faster and the growth of what AI can do overall will compound. Engineers writing code with AI are best positioned to understand how fast things are advancing because they are on the bleeding edge of what will happen everywhere else.

The growth in quality and speed of AI writing code will eventually happen everywhere. But, you might say, programming is a discrete task! AI can’t come for all other human cognitive tasks. Well, there is a lot of independent testing data saying things aren’t just moving fast in other areas; progress is rapidly accelerating:

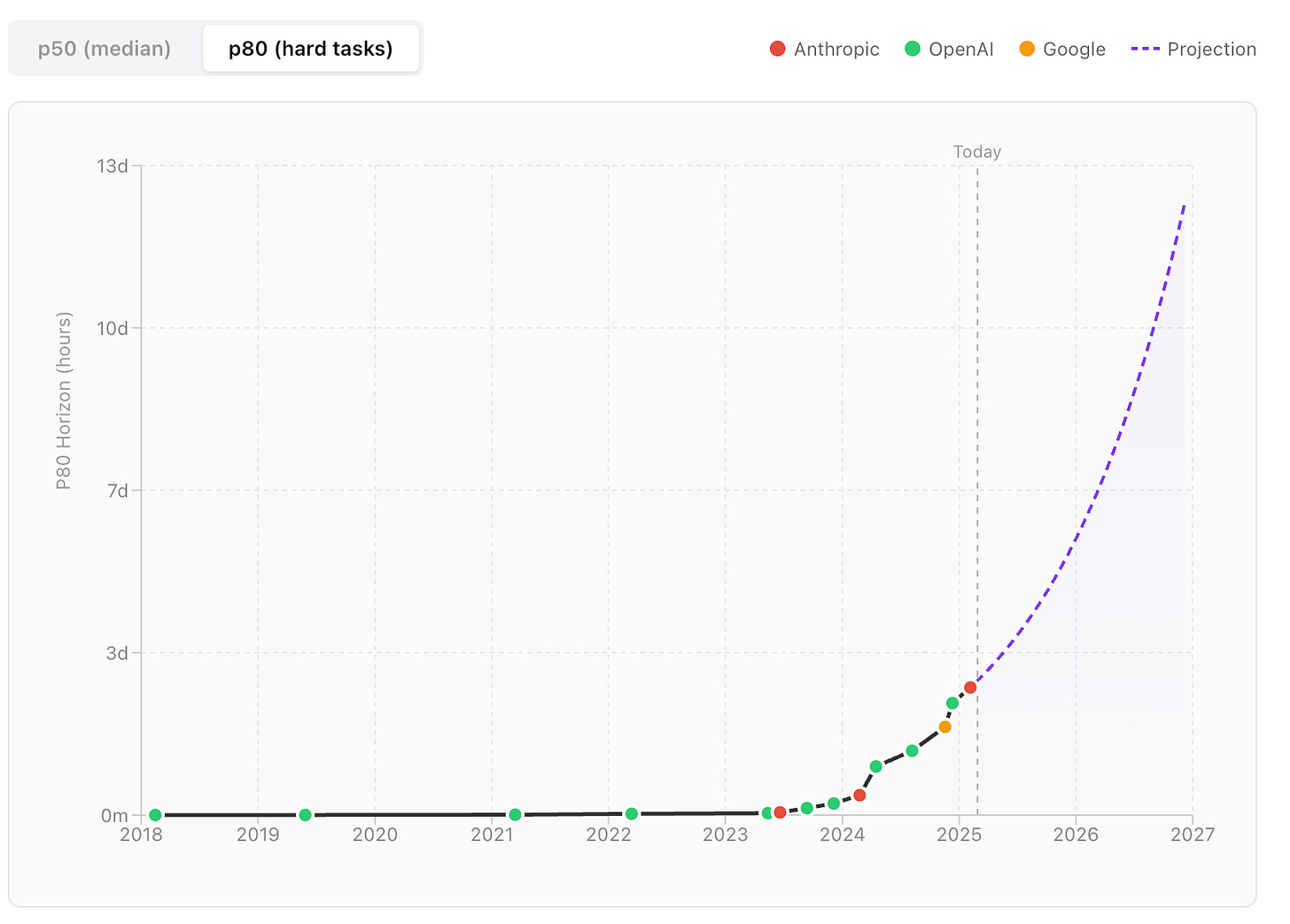

This graph[2] shows the current rate of change[3] extrapolated forward about two years (the rate itself is actually increasing, but I’m ignoring that for now). The engineers using AI to write code are so close to this that they witness the jump in effectiveness with every new model. They aren’t saying AI is taking over because AI coding is getting good; they are saying it is taking over because most human cognitive tasks are improving at the same rate they see in programming. Engineers writing code have the best up-close seat to witness the change because programming as a use case is at the forefront of progress.
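For anyone who wants to see the arithmetic behind this kind of extrapolation, here is a minimal sketch. It assumes the measured quantity (task length, per the footnote) doubles at a fixed interval. The function name and every number in the example are hypothetical placeholders, not values read off the graph.

```python
def extrapolate(current_value: float, doubling_months: float, months_ahead: float) -> float:
    """Project a value forward assuming it doubles every `doubling_months`."""
    return current_value * 2 ** (months_ahead / doubling_months)


# Hypothetical inputs: a task length of 1 hour today, doubling every 6 months.
# Neither number comes from the post or the graph; swap in real measurements.
projected_hours = extrapolate(current_value=1.0, doubling_months=6, months_ahead=24)
print(projected_hours)  # 16.0 (four doublings in two years)
```

The point of the sketch is only that a fixed doubling time compounds quickly. A doubling time that itself shrinks, as the parenthetical above suggests, would grow faster than this.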

Is AI going to cause mass unemployment of white collar workers and destabilize the world economy?

In economic terms, can the advancement of AI lead to negative growth? And how bad can negative economic growth get? A professor from the University of Chicago attempts to answer the question here. He begins by laying out the scenario in very simple terms:

Imagine there’s an island with 100 workers and 10 capital owners. Everyone consumes two types of goods, fish and coconuts. Workers catch fish and harvest coconuts using nets and ladders provided by the capital owners. Workers are paid wages for their labor and spend everything they earn on the two goods. Revenue goes directly to the owners. The owners are wealthy, and because a person can only eat so many fish and coconuts, they consume 10 of each per day and have their fill.

Now let’s say fishing gets automated. Someone invents self-operating nets that catch fish without workers. Workers are fully displaced from fishing and move on to harvesting coconuts, which still requires labor. Workers still earn wages, and because fish has now become much cheaper, they may be even better off than before as their earnings can now buy them more of the two goods. Output rises in this economy: owners respond to demand by investing in nets and labor is now fully devoted to coconuts.

But suppose someone invents an automatic harvester that picks coconuts, and there are enough of these harvesters to fully replace workers in the groves as well. Both sectors are now fully automated and the workers cannot be re-deployed elsewhere. They earn nothing and spend nothing. What happens to output? Well owners are still satiated with 10 fish and coconuts each. That’s 200 units of total demand. Machines are sitting idle: they could produce 10,000 units, and even more if owners would invest in building more. But why would the owners bother if there’s no one to buy the output? In this economy, full automation led to negative growth because of demand collapse.
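The demand-collapse arithmetic in the island example can be sketched in a few lines of Python. This is a rough illustration, not the professor's model: the function and constant names are hypothetical, the owner count, satiation level and 10,000-unit capacity come from the quoted passage, and the pre-automation worker demand is a made-up placeholder because the passage does not give one.

```python
OWNERS = 10                 # from the quoted example
GOODS = 2                   # fish and coconuts
SATIATION_PER_GOOD = 10     # each owner consumes 10 of each good per day
MACHINE_CAPACITY = 10_000   # units the automated economy could produce


def island_output(worker_demand: int, capacity: int = MACHINE_CAPACITY) -> int:
    """Output is capped by whichever is smaller: total demand or capacity."""
    owner_demand = OWNERS * GOODS * SATIATION_PER_GOOD  # 200 units
    return min(owner_demand + worker_demand, capacity)


# ASSUMPTION: 100 workers each buying 3 units a day. This figure is
# hypothetical and only serves to give a "before" to compare against.
# Capacity is assumed not to bind before automation.
HYPOTHETICAL_WORKER_DEMAND = 100 * 3

before = island_output(HYPOTHETICAL_WORKER_DEMAND)  # workers still earn and spend
after = island_output(0)                            # full automation, no wages
idle = MACHINE_CAPACITY - after

print(before, after, idle)  # 500 200 9800
```

Under these assumptions output falls even though productive capacity is far higher, which is the whole mechanism: once wages vanish, only the owners' satiated demand of 200 units is left.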

He goes on to say that conditions for negative growth certainly do exist, but he deems them unrealistic in the real world. He examines two models and basically says it won’t happen because labor displacement won’t be large enough and adoption of AI technology won’t be fast enough.

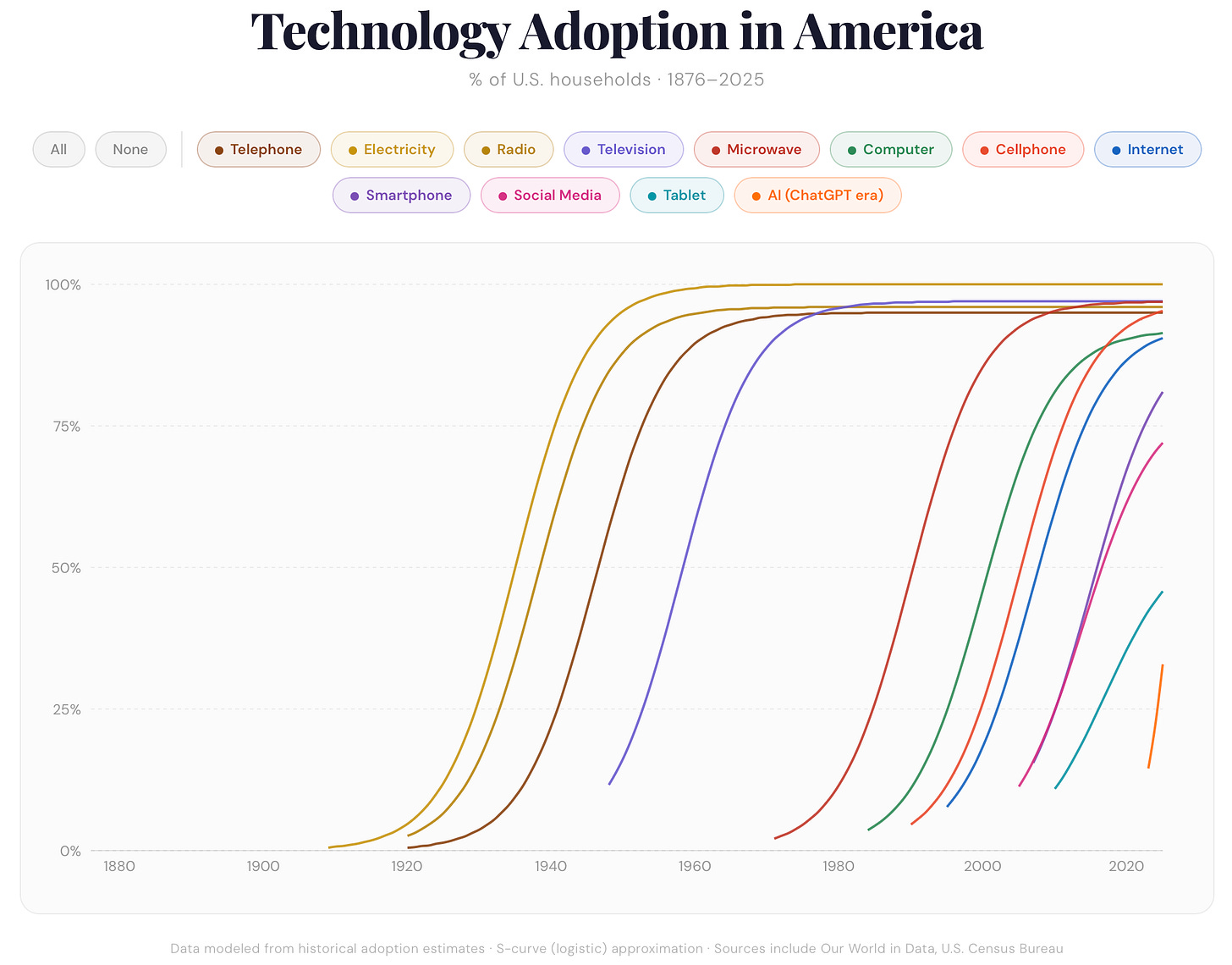

To me these are reasonable critiques, but they are problematic because they rely on historical cases. The counterargument is that AI is so unprecedented in growth and power that it will break existing thinking about economics. If you look across waves of adoption of major technologies, each is faster than the one before. AI adoption has already proven faster than prior technology cycles. Will it be orders of magnitude faster? I think so.

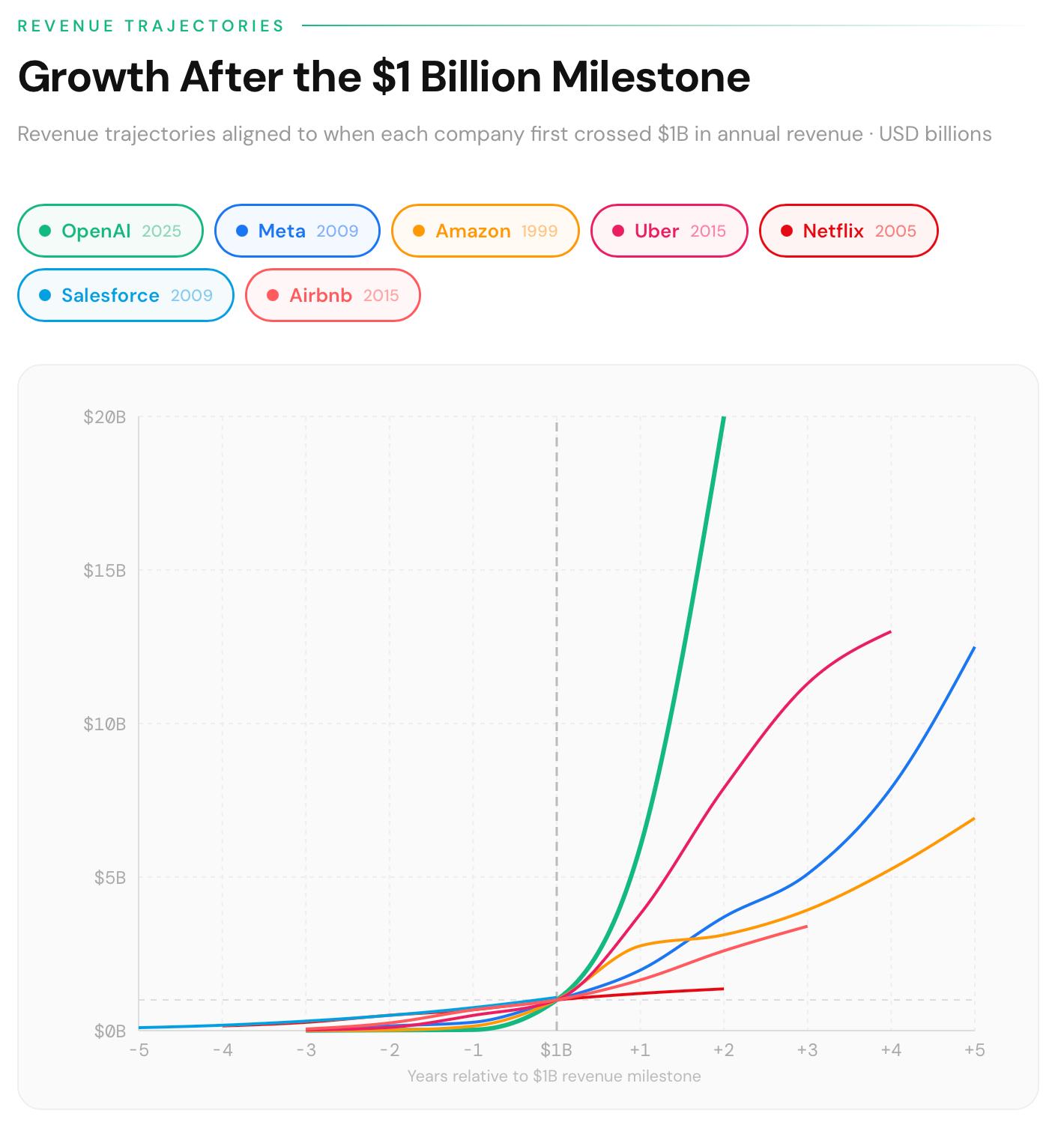

Compared to other rapidly growing tech companies that changed the world, AI (using only the largest company’s revenue as a measure) is also growing faster than anything we’ve ever seen before.

The signs of AI being bigger, faster and more disruptive than any technology that has come before are there, but the truth of how far-reaching this will be is likely not quite as extreme as the Citrini Research memo suggests. I think many, but not all, of the scenarios in the memo are likely to come true, and much sooner than people realize, but we won’t reach a full-blown state where Sam Altman owns all the world’s fish and coconuts.

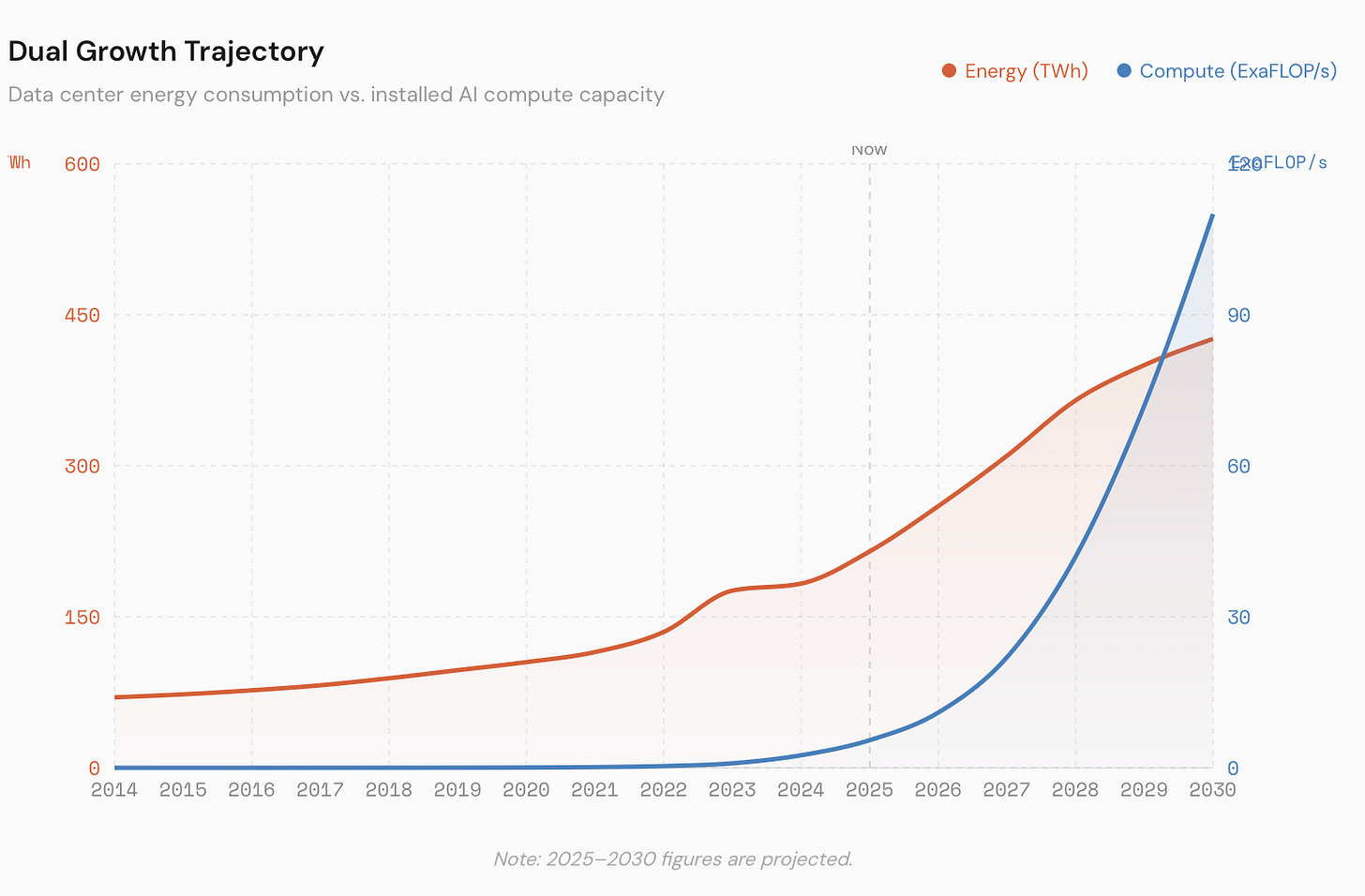

This is because there are still two pretty big constraints on how fast the AI companies can grow: energy and compute.

It seems like we are on pace to get there on the compute front, but it also seems like energy becomes a constraint[4]. It’s hard to say where energy constraints land: do they actually slow down compute at some stage, or does energy just get really expensive for the average person? Do we finally as a country invest more in nuclear? Probably yes. Do we finally get a fusion breakthrough? I have no idea.

With all of this in the background, how does the average company react? Do AI agents replace all white collar workers overnight and we wake up to mass unemployment one day?

Early signs are that companies are going to be encouraged to get leaner, with smaller workforces and more reliance on AI. There is a real chance this becomes self-fulfilling: the market responds favorably to companies that do this, so other companies do it too, even if they aren’t actually in a position to do more with fewer people and more AI.

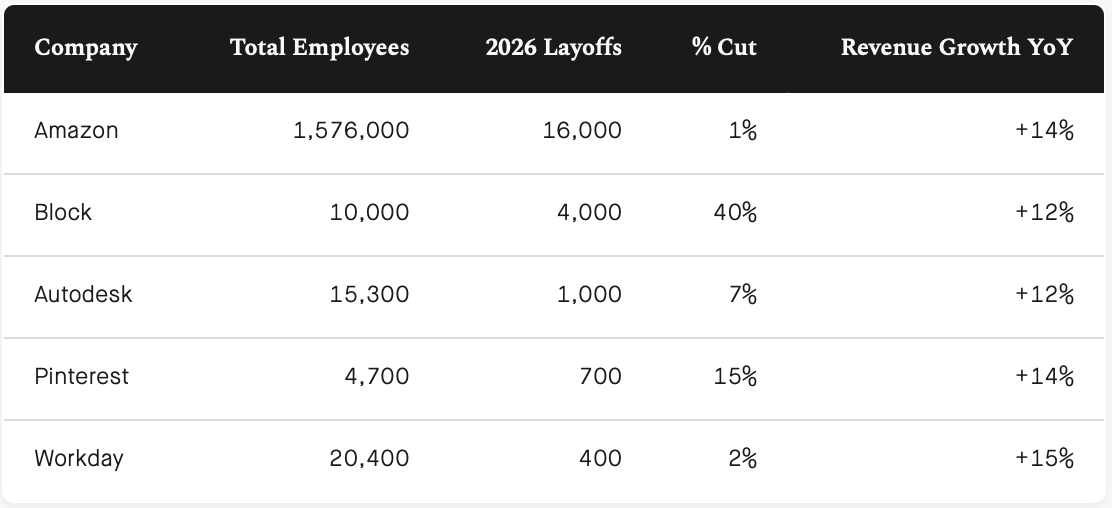

Jack Dorsey’s fintech company Block announced they were letting go of 40% of their workforce last week and he said:

Within the next year, I believe the majority of companies will reach the same conclusion & make similar structural changes. I’d rather get there honestly & on our own terms than be forced into it reactively.

Block drawing a line in the sand at 40% is huge. If this is what a healthy company consistently growing revenue is doing, what should the struggling ones do?

Every CEO & Board in America is looking at Jack’s statement asking if they should do the same and get out ahead of the inevitable. Especially if the market will just reward them with a share price bump.

Is all of this going to end in economic collapse? Like I’ve said in the past, the more pessimistic people will continue to say “it just hasn’t happened YET” and the more optimistic folks will continue to say “yeah because it’s not happening EVER.” The only ways for this to resolve are either: doom scenarios happen or a really long time goes by and we stop talking about it.

Why is AI going to wipe out humanity in the form of Terminators that look like Will Smith?

Wait, what?! What does Will Smith have to do with this? Read on, I promise this will make sense.

The average person reading this, who has never written and will never write a line of code, still might not be convinced this rate of improvement is happening in other areas. So here’s an example in video format. As with coding, generative AI for video is advancing at an ever faster rate.

Three short years ago, a video of Will Smith went viral. In the AI generated video, Will Smith is eating spaghetti…very poorly. On one hand it was impressive that this was created from a single text prompt[5], but on the other hand…no one was getting fooled into thinking this was real.

The viral video turned into a benchmark of the quality increases of generative AI’s ability in video. Three years and a couple of models later, here is how far we’ve come:

It is hard to deny the progress. The Will Smith Spaghetti Test advancing is a much better means of showing progress for the average person than engineers saying “programming is getting pretty good” because even to me, a person who can program, that’s like saying “AI doing quantum physics is getting good.” My reaction is…ok that’s great I guess? The video advancement feels so much more tangible.

But what about the Terminators? Well, underpinning a lot of the anxiety about AI isn’t just whether it somehow wrecks the economy; it is the fear of Skynet becoming real and the Terminator films going from fun science fiction to accurate predictions of our future.

In one of the middling 2000s films in the series, Terminator Salvation, the human resistance in the future eventually makes it to a factory where Terminators are being built. Enter rows and rows of Arnold Schwarzenegger Terminators. Beyond the obvious reason of the franchise wanting Arnold to keep coming back for sequels, I always wondered why Skynet would make most of the Terminators look the same. What if there was a practical reason?

I’d like to think that in a world where AI turns into AGI (artificial general intelligence), gets loose and decides to turn on humanity, it will have a sense of humor. Despite the ridiculously complicated effort that would go into building machines that can travel through time, I’d like to think that in some future scenario humanity is locked in an endless struggle with Will Smiths.

Imagine a scene fifty years from now: a young child asks, “Why do all the Terminators look like that guy?” The child’s parent lets out a long sigh and says, “We don’t know what event set us on this path. The only thing that matters is stopping them.”

Jokes aside, despite the growth of Gen AI, my view is that actual AGI still seems very far off. I’m not sure the deterministic LLM path gets us there. And for what it’s worth, the most popular theory in Silicon Valley is not Skynet, but that someone invents hyperrealistic robot AI girlfriends, which causes our population growth to collapse[6].

Personal odds and ends: I’ve stopped doing standalone posts about stuff I’m building or reading, so here’s a brain dump.

Building: Here’s a demo video of an F1 Circuit Designer I should finish and release soon. If you want to test it, let me know. I finally revamped my personal website. I built a proof of concept of a movie trivia game, but I don’t love how the typeaheads have to work in practice and it isn’t very fun, so I abandoned the idea.

Doing: There’s a new sim racing venue in Brooklyn I’ve been enjoying. If you stop by, tell the owner Matt I recommended it. Or if you want to visit, let me know.

Reading: What’s happening in the crypto market? Institutions are extracting value and retail is chasing volatility elsewhere.

Working: We launched an update to the Gravity Labs site which offers more detail about what we are doing and some of our recent projects.

Remember when everyone was concerned about a bubble a few short months ago? How quaint. Bubble burst risk is still very real if the energy and compute build out cannot keep pace.

Technically this is task length, but I think it is a close enough proxy for general improvement. If you dig through the METR site, you can find all sorts of more specific studies.

Another thought experiment: pick any problem. If most of the brightest minds in the world were focused on it, with trillions of dollars, basically no constraints, and the motivation that they would become the richest people ever, could they solve it? Probably yes? We’ve put people on the Moon. We did the Manhattan Project. Humanity can do some amazing things when focused with the right incentives.

In this big debate I think people are losing sight of the fact that if AI advancement stopped today and never went further this would still be one of the most transformational technologies of the last 25 years.

If it seems fantastical, watch this underrated movie.

I have friends that rely on AI for financial guidance. Dangerous.